It’s that time again: presidential election forecasts are cropping up left and right. And they all point in the same direction, with varying degrees of confidence. Until the election, there will be arguments over which model is “best” (arguments completely uninfluenced by the participants’ own political leanings, of course). After the election, assuming we still have a country, a much smaller group of people will want to look back and decide which model ultimately came closest to the actual results.

This sounds like a straightforward exercise. The hard part, after all, is predicting the election before it happens. Afterwards, all we have to do is see who called the most states correctly. Right?

In fact, evaluating election models is a tricky business. Most of the ways people have compared models in the past are flawed. And thinking about how to evaluate models can force us to think more clearly about the value of election models at all.

An election model is a polling average plus correlation

When I say “election model,” I’m talking about a probabilistic forecast for the national popular vote along with all subnational results. For presidential forecasts, that’s 51 to 56 state-ish-level forecasts (depending on whether you predict the Nebraska and Maine congressional districts). Some forecasters build these predictions through a bunch of ad-hoc procedures stacked on top of one another; others construct coherent probability models and do Bayesian inference. What matters for evaluation is having access to predictive distributions, however they were derived.

It’s helpful to think of the model in two pieces: the baseline estimate of the expected national and state vote shares, and the model for how deviations from these baselines are correlated across states. Regardless of how the model is built, forecasters have to spend time separately tackling each of these pieces.

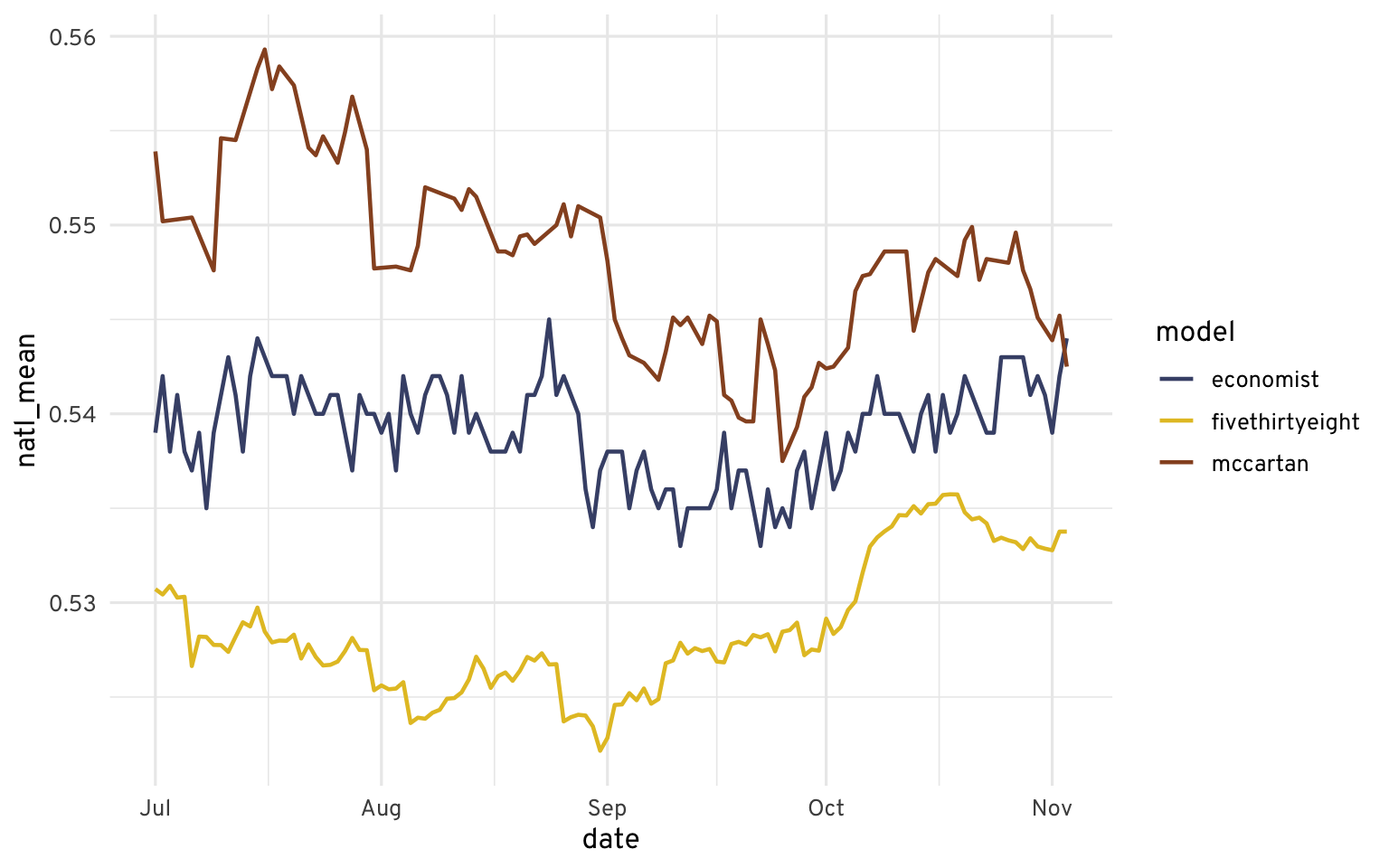
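
To make the two pieces concrete, here is a minimal sketch in Python. It is not any particular forecaster's model: the three baseline vote shares, the 3-point state-level standard deviation, and the single shared correlation `rho` are all made-up values for illustration, and the function names are hypothetical. The baseline stays fixed while the correlation changes, and the quantity compared is the chance of losing every state at once.

```python
import numpy as np

def simulate_state_shares(baseline, state_sd, rho, n_sims=10_000, seed=0):
    """Simulate vote shares as baseline plus correlated normal errors."""
    baseline = np.asarray(baseline, dtype=float)
    k = baseline.size
    # Piece 2: correlation structure. Here every pair of states shares the
    # same correlation rho (a simplifying assumption, not a realistic one).
    corr = np.full((k, k), rho)
    np.fill_diagonal(corr, 1.0)
    cov = corr * state_sd**2
    rng = np.random.default_rng(seed)
    errors = rng.multivariate_normal(np.zeros(k), cov, size=n_sims)
    # Piece 1: the baseline (for example, a polling average) shifts each column.
    return baseline + errors

def prob_lose_all(baseline, state_sd, rho):
    """Share of simulations in which the candidate falls below 50% everywhere."""
    sims = simulate_state_shares(baseline, state_sd, rho)
    return float(np.mean((sims < 0.5).all(axis=1)))

# Hypothetical two-party baselines for three close states.
baseline = np.array([0.52, 0.51, 0.505])

for rho in (0.0, 0.8):
    print(f"rho={rho}: P(lose all three) = {prob_lose_all(baseline, 0.03, rho):.3f}")
```

Each state's marginal distribution is identical in both runs, so the polling-average piece has not changed at all. Only the joint behavior differs, and the sweep probability comes out several times higher when the errors are strongly correlated.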

The first part gets the most attention, but it leaves forecasters little room to disagree. There are good and bad ways to average polls, but for better or worse they will usually produce similar results by Election Day. Of course, when it comes to competitive national elections, small differences can be important.

The second part usually gets much less attention, but it’s the reason Sam Wang had to eat a bug on national television. He was far from the first or last to build a model that got the state correlation structure wrong, though. Even when modelers think carefully about the correlation structure, they have far less data available to estimate it. (Naively, think about estimating roughly 1300 pairwise correlations from a dozen or so presidential elections, then think about how some of those states, like West Virginia, have changed politically over the same window.)
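
The "roughly 1300" figure is just the number of distinct pairs among the state-level units, which a quick count confirms. In the sketch below, the function name is hypothetical, and `n_elections = 12` is a stand-in for "a dozen or so," not a claim about how many elections any modeler actually uses.

```python
from math import comb

def n_pairwise_correlations(n_units: int) -> int:
    """Number of distinct pairs among n_units state-level results."""
    return comb(n_units, 2)

# 50 states plus DC, or with the Nebraska and Maine districts added.
for n_units in (51, 56):
    print(n_units, "units ->", n_pairwise_correlations(n_units), "correlations")
# 51 units -> 1275 correlations
# 56 units -> 1540 correlations

n_elections = 12  # assumption standing in for "a dozen or so"
print(n_pairwise_correlations(51) / n_elections, "correlations per election")
```

With only one observation per state per election, the number of parameters exceeds the number of elections by about two orders of magnitude, which is why modelers lean on structural assumptions rather than raw historical correlations.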

Evaluating Toplines

Electoral College

Evaluating Model Dynamics